Megha Kalia

AI Researcher | PhD

I’m an AI Applied Scientist who designs and deploys deep learning models for on-device AI in wearables; my work spans time-series modeling, agentic systems, and MLOps.

I hold a PhD and have 8+ years of experience in digital health, computer vision, and AI across industry, academia, and startup consulting. Previously, I was a Research Scientist and Postdoctoral Fellow at Harvard Medical School, working on surgical automation, self-supervised learning, and medical robotics. I’ve collaborated with Canon and Zeiss, and completed research internships at Intuitive Surgical and Bosch.

My academic training spans top universities across four continents, supported by prestigious scholarships and international fellowships. My work has been recognized with multiple Best Paper Awards at leading medical imaging and robotics conferences.

Education:

Ph.D., University of British Columbia · Master's, IIT Kharagpur · Fellowships: Postdoc (Harvard Medical School), DAAD (Technical University of Munich), Friedman (New York University)

Research Interests:

Deep Learning, Time-Series Modeling, Computer Vision, LLMs, Diffusion Models

Outside work:

Biking · Running · Reading

A CrewAI multi-agent system that uses Google Gemini's vision capabilities to extract items from grocery receipts and match them against a household inventory. It then auto-generates a prioritized weekly shopping list by comparing what you have with what you need.

Tech Stack: Next.js 15 · Python/Flask · Google Gemini 2.5 Flash · CrewAI · Vercel + Render
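The inventory-versus-needs comparison behind the shopping list can be sketched in plain Python. This is a hypothetical illustration, not the project's code: the `Need` record, the priority convention (lower number = more urgent), and the `normalize` helper, which stands in for the Gemini/CrewAI item-matching step with simple case and whitespace folding, are all assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Need:
    """Hypothetical weekly requirement for one household item."""
    quantity: int
    priority: int  # assumed convention: lower number = more urgent


def normalize(name: str) -> str:
    # Stand-in for LLM-based item matching: fold case and collapse whitespace.
    return " ".join(name.lower().split())


def update_inventory(inventory: dict[str, int], receipt_items: list[tuple[str, int]]) -> dict[str, int]:
    """Add quantities parsed from a receipt to the current inventory."""
    updated = {normalize(k): v for k, v in inventory.items()}
    for name, qty in receipt_items:
        key = normalize(name)
        updated[key] = updated.get(key, 0) + qty
    return updated


def shopping_list(inventory: dict[str, int], needs: dict[str, Need]) -> list[tuple[str, int]]:
    """Return (item, quantity_to_buy) for every shortfall, most urgent first."""
    shortfalls = []
    for name, need in needs.items():
        key = normalize(name)
        missing = need.quantity - inventory.get(key, 0)
        if missing > 0:
            shortfalls.append((need.priority, key, missing))
    shortfalls.sort()  # by priority, then alphabetically by item name
    return [(item, qty) for _, item, qty in shortfalls]


# Example usage with made-up items.
inventory = update_inventory({"Milk": 1}, [("milk ", 1), ("Eggs", 6)])
needs = {"milk": Need(3, 1), "eggs": Need(12, 2), "coffee": Need(1, 1)}
print(shopping_list(inventory, needs))  # [('coffee', 1), ('milk', 1), ('eggs', 6)]
```

Sorting on the `(priority, item, quantity)` tuple keeps the output deterministic when several items share a priority.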

Self-supervised monocular pose estimation framework that jointly learns scene depth and relative camera motion directly from raw video sequences, without ground-truth annotations. By enforcing photometric and geometric consistency constraints within a PyTorch-based deep learning pipeline, the system offers a scalable, data-efficient approach to vision-based motion estimation.
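The geometric core of this kind of self-supervised training is view synthesis: each target pixel is back-projected with its predicted depth, moved by the predicted relative pose, and projected into the source frame, where a photometric loss compares intensities. Below is a minimal pure-Python sketch, assuming a zero-skew pinhole camera and a target-to-source pose (R, t); `reproject` and `photometric_l1` are hypothetical names, and a real PyTorch pipeline would vectorize this and use bilinear sampling (e.g. `torch.nn.functional.grid_sample`) rather than per-pixel calls.

```python
def reproject(u, v, depth, K, R, t):
    """Map target pixel (u, v) with predicted depth into the source image.

    K: 3x3 pinhole intrinsics (zero skew assumed), as nested lists.
    R, t: 3x3 rotation and length-3 translation taking points from the
    target camera frame to the source camera frame (assumed convention).
    """
    fx, fy, cx, cy = K[0][0], K[1][1], K[0][2], K[1][2]
    # Back-project with K^-1 and scale by depth: 3D point in the target frame.
    point = [(u - cx) / fx * depth, (v - cy) / fy * depth, depth]
    # Rigid motion into the source frame: R @ point + t.
    moved = [sum(R[i][j] * point[j] for j in range(3)) + t[i] for i in range(3)]
    # Perspective projection back to pixels with the same intrinsics.
    return fx * moved[0] / moved[2] + cx, fy * moved[1] / moved[2] + cy


def photometric_l1(target, warped):
    """Mean absolute intensity difference between target and warped source images."""
    diffs = [abs(a - b) for row_t, row_w in zip(target, warped) for a, b in zip(row_t, row_w)]
    return sum(diffs) / len(diffs)


# Example: a sideways translation of 0.1 (same units as depth) shifts a pixel
# at depth 2.0 by fx * 0.1 / 2.0 = 25 px.
K = [[500.0, 0.0, 320.0], [0.0, 500.0, 240.0], [0.0, 0.0, 1.0]]
I3 = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
print(reproject(100, 50, 2.0, K, I3, [0.0, 0.0, 0.0]))  # approximately (100, 50)
print(reproject(100, 50, 2.0, K, I3, [0.1, 0.0, 0.0]))  # approximately (125, 50)
```

Because the warp depends on both the depth and the pose, minimizing the photometric error between the target image and the warped source image trains both predictions at once, with no ground-truth labels.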

This project explores how much delay people can tolerate between what they see and what they feel in a Mixed Reality (MR) environment. Built in **Unity3D (C#)**, the system integrates an **HTC Vive** headset for immersive VR and a **3D Systems Touch** haptic device for force feedback. I programmatically introduced controlled delays between visual and tactile events to measure the point at which users begin to notice the desynchronization. The goal is to better understand human tolerance to latency and to provide practical insights for designing responsive robotic and haptic systems for metaverse and MR applications. (Completed as part of the Friedman Scholarship, New York University, USA.)
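The controlled delay can be modeled as a queue that holds each event and releases it only after a fixed injected latency has elapsed. The project itself was built in Unity3D (C#); the Python sketch below is a hypothetical, engine-agnostic illustration that assumes a millisecond monotonic clock polled once per frame, and `DelayedEventQueue` is an invented name.

```python
import heapq


class DelayedEventQueue:
    """Releases each pushed event only after a fixed delay (hypothetical helper).

    Times are in milliseconds from any monotonic clock.
    """

    def __init__(self, delay_ms: float):
        self.delay_ms = delay_ms
        self._heap = []  # entries: (release_time_ms, sequence_number, event)
        self._seq = 0    # tie-breaker keeps FIFO order for equal release times

    def push(self, event, now_ms: float) -> None:
        heapq.heappush(self._heap, (now_ms + self.delay_ms, self._seq, event))
        self._seq += 1

    def pop_ready(self, now_ms: float) -> list:
        """Return every event whose release time has been reached, oldest first."""
        ready = []
        while self._heap and self._heap[0][0] <= now_ms:
            ready.append(heapq.heappop(self._heap)[2])
        return ready


# Example: inject 50 ms of latency into the haptic stream.
q = DelayedEventQueue(delay_ms=50.0)
q.push("contact", now_ms=0.0)
q.push("release", now_ms=10.0)
early = q.pop_ready(now_ms=49.0)    # [] : nothing is due yet
on_time = q.pop_ready(now_ms=60.0)  # ['contact', 'release']
```

Sweeping `delay_ms` across trials, and recording whether the participant reports a mismatch, is one way to locate the detection threshold.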

Our method adopts a joint generation and segmentation strategy to learn a segmentation model that generalizes better to domains with no labelled data, by leveraging labelled data available in a different domain. A generator translates images from the labelled domain to the unlabelled domain while, simultaneously, the segmentation model learns from the generated data and regularizes the generative model. Presented at MICCAI 2021 (Oral, Top 13%).
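A generic objective of this kind can be written as follows. This is a sketch under stated assumptions, not the paper's exact loss: the generator G (labelled-to-unlabelled domain), the discriminator D, the segmentation network S, the adversarial term, and the weight λ are illustrative, with (x_s, y_s) a labelled source image and mask and x_t an unlabelled target image.

```latex
\min_{G,\,S}\;\max_{D}\;
  \mathcal{L}_{\mathrm{adv}}\big(G, D;\, x_s, x_t\big)
  \;+\; \lambda\, \mathcal{L}_{\mathrm{seg}}\big(S(G(x_s)),\, y_s\big)
```

Because the segmentation loss is evaluated on G(x_s), its gradient also flows into G. That is one way the segmentation task can regularize the generator toward translations that preserve instrument shape.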

The project aims to give surgeons enhanced visualization for better surgical planning and accuracy. A patient’s MRI is deformably registered to a phantom. The image shows the 3D mesh (pink) generated from the MRI, together with the MRI plane. The transverse plane of the MRI is controlled with the surgical instrument: as the instrument moves, the displayed plane changes with it. At many positions, tumor regions (red) are visible on the MRI plane.
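Driving the displayed transverse plane from the instrument amounts to mapping the tracked tip position into MRI voxel coordinates and picking the nearest axial slice. Below is a minimal sketch. It assumes the phantom-to-MRI mapping can be approximated by a single 4x4 affine transform (the actual registration is deformable) and that slices are stacked along the third voxel axis. `tip_to_slice_index` is a hypothetical name.

```python
def tip_to_slice_index(tip_xyz, world_to_voxel, num_slices):
    """Map a tracked instrument tip to the transverse MRI slice to display.

    tip_xyz: tip position (x, y, z) in the tracker/phantom frame.
    world_to_voxel: 4x4 homogeneous transform as nested lists, assumed to be an
    affine approximation of the phantom-to-MRI registration.
    """
    p = (tip_xyz[0], tip_xyz[1], tip_xyz[2], 1.0)
    # Only the slice-axis row (k) and the homogeneous row (w) are needed.
    k = sum(world_to_voxel[2][c] * p[c] for c in range(4))
    w = sum(world_to_voxel[3][c] * p[c] for c in range(4))
    k_index = round(k / w)  # nearest slice along the axial axis
    return max(0, min(num_slices - 1, k_index))  # clamp to the volume


# Example: 2 mm slice spacing (0.5 voxel per mm) with a 10-slice offset.
T = [[1.0, 0.0, 0.0, 0.0],
     [0.0, 1.0, 0.0, 0.0],
     [0.0, 0.0, 0.5, 10.0],
     [0.0, 0.0, 0.0, 1.0]]
print(tip_to_slice_index((0.0, 0.0, 40.0), T, num_slices=120))  # 30
```

Clamping keeps the displayed plane on the first or last slice when the instrument leaves the scanned volume, rather than failing on an out-of-range index.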

Self-supervised direct pose estimation for vision-based tracking in navigated bronchoscopy.

Computers in Biology and Medicine, 197, 110958.

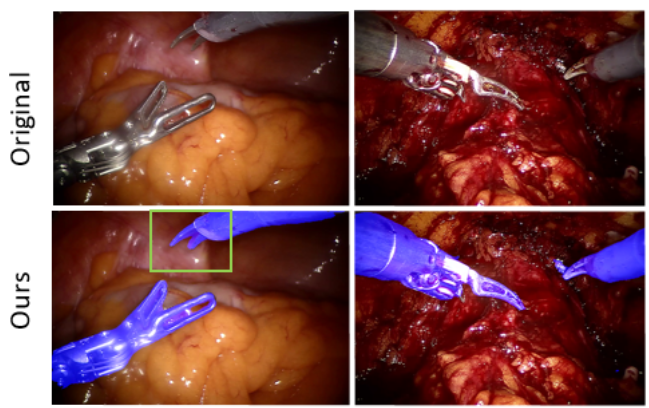

Augmented reality guidance for robot-assisted laparoscopic surgery.

(Doctoral dissertation, University of British Columbia)

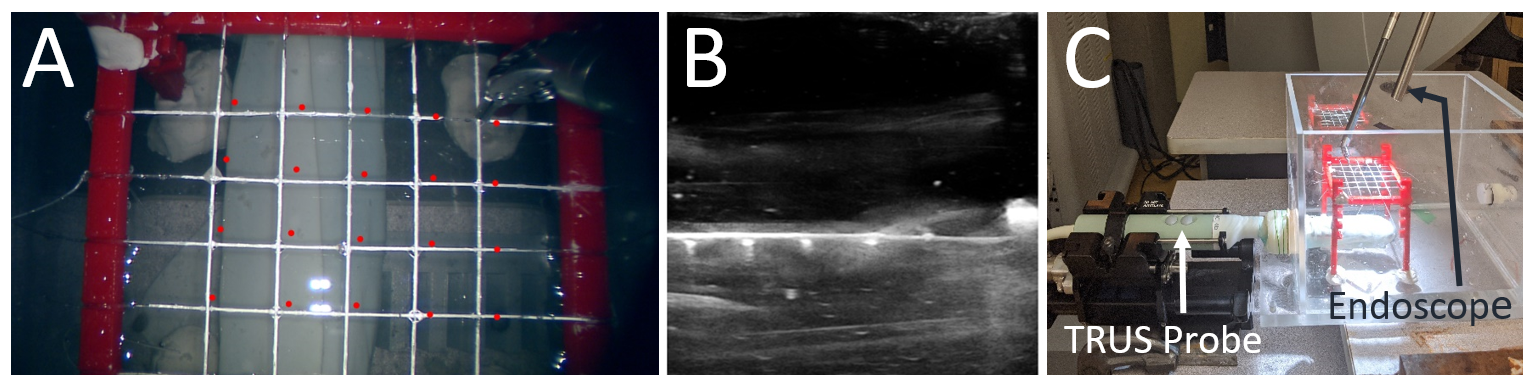

Towards transcervical ultrasound image guidance for transoral robotic surgery

International Journal of Computer Assisted Radiology and Surgery, 18(6), 1061-1068.

Transcervical Ultrasound Image Guidance System for Transoral Robotic Surgery

Clinical Orthopaedics and Related Research (CoRR)

A Deep Learning Based Approach for Camera Switching in Amateur Ice Hockey Game Broadcasting

International Conference on Signal Processing and Information Communications. Paris.

Co-Generation and Segmentation for Generalized Surgical Instrument Segmentation on Unlabelled Data

International Conference on Medical Image Computing and Computer-Assisted Intervention (pp. 403-412). Springer, Cham.

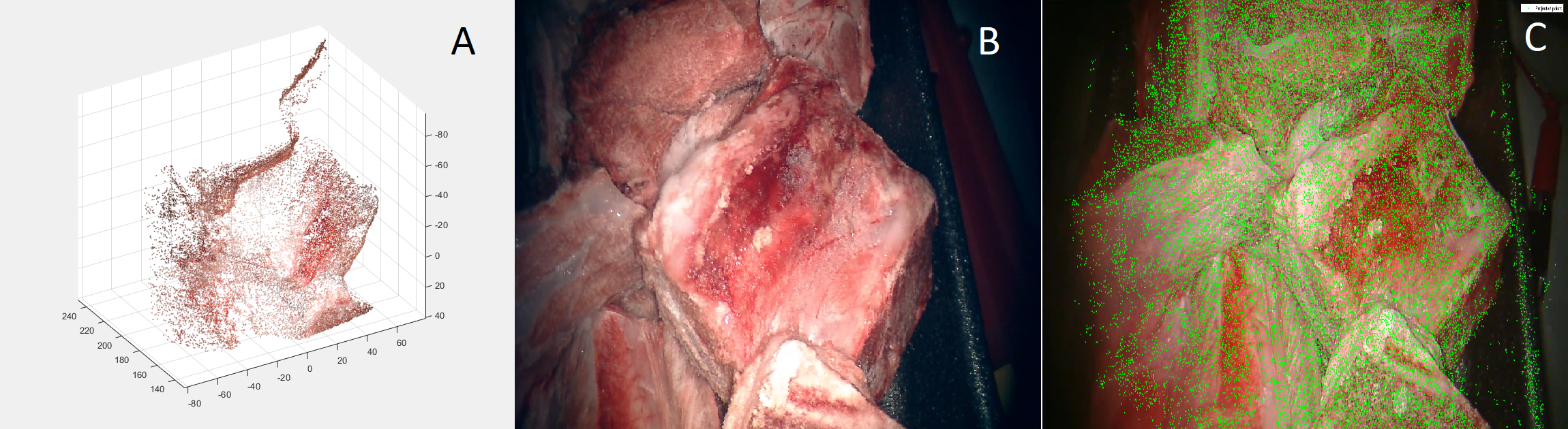

Preclinical evaluation of a markerless, real-time, augmented reality guidance system

International Journal of Computer Assisted Radiology and Surgery, 1-8.

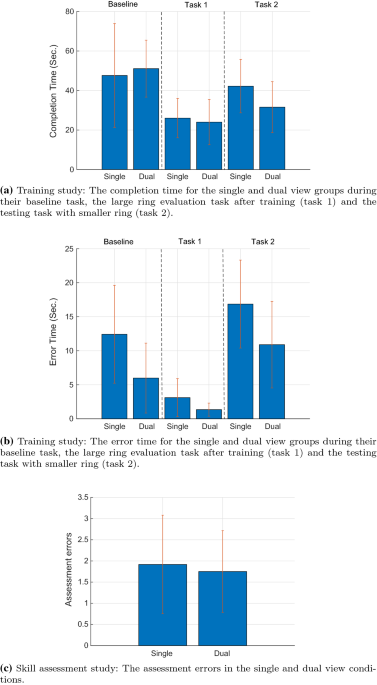

A multi-camera, multi-view system for training and skill assessment for robot-assisted surgery

International Journal of Computer Assisted Radiology and Surgery, 15, 1369-1377.

Evaluation of a marker-less, intra-operative, augmented reality guidance system

International Journal of Computer Assisted Radiology and Surgery, 15, 1225-1233.

Marker-less real-time intra-operative camera and hand-eye calibration for surgical AR

Healthcare Technology Letters, 6(6), 255-260.

A real-time interactive AR depth estimation technique for surgical robotics

In 2019 International Conference on Robotics and Automation (ICRA) (pp. 8291-8297). IEEE.

A Method to Introduce & Evaluate Motion Parallax with Stereo for Medical AR/MR

In 2019 IEEE Conference on Virtual Reality and 3D User Interfaces (VR) (pp. 1755-1759). IEEE.

Interactive depth of focus for improved depth perception

In International Conference on Medical Imaging and Augmented Reality (pp. 221-232). Springer, Cham.